The Convergence Problem

I ran across a study in Science late last year that I haven’t been able to set aside. Researchers analyzed more than 2 million papers and found that scientists using AI tools published significantly more work than those who didn’t. Output jumped 59.8% in social sciences and humanities, 52.9% in biology and life sciences.

But the acceptance rates at peer-reviewed journals didn’t keep pace. More papers got submitted and more of them were mediocre. The review system strained under a flood of work that looked polished but didn’t always say anything new.

That pattern shows up anywhere AI tools get used heavily for thinking and creating. Individual productivity goes up, but the collective originality of the output goes down.

I think this is a bigger issue than most people realize, especially for companies that compete on the quality of their ideas. I wrote about a related version of this in The AI Productivity Lie, which covers the gap between what productivity metrics show and what’s actually happening. This is the same gap, applied to thinking instead of coding.

Large language models predict the most probable next token. They’re optimized for the expected answer: convention over novelty, probability over surprise. That tendency gets reinforced through RLHF (Reinforcement Learning from Human Feedback), which fine-tunes models to land in the center of human preference. Another way to put it: these systems are trained to avoid the edges.
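To make “probability over surprise” concrete, here is a toy sketch in Python. The candidate completions and their probabilities are made up for illustration, and the `sharpen` step is ordinary temperature scaling, not RLHF itself; it is only a stand-in for anything that concentrates a model’s output on its most likely answer.

```python
import random
from collections import Counter

# Hypothetical next-step probabilities for a prompt such as
# "Our key differentiator is ...". These numbers are invented for illustration.
NEXT_TOKEN_PROBS = {
    "ease of use": 0.40,
    "integrations": 0.25,
    "pricing": 0.20,
    "offline-first design": 0.10,
    "repairability": 0.05,
}


def sharpen(probs, temperature):
    """Rescale a distribution; a temperature below 1 moves mass toward the top option."""
    weights = {tok: p ** (1.0 / temperature) for tok, p in probs.items()}
    total = sum(weights.values())
    return {tok: w / total for tok, w in weights.items()}


def greedy(probs):
    """Always pick the single most probable option."""
    return max(probs, key=probs.get)


def run_sessions(probs, n_sessions, seed=0):
    """Simulate independent sessions that each sample one completion."""
    rng = random.Random(seed)
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return Counter(rng.choices(tokens, weights=weights, k=n_sessions))


if __name__ == "__main__":
    print("greedy pick:", greedy(NEXT_TOKEN_PROBS))
    print("original:", run_sessions(NEXT_TOKEN_PROBS, 100))
    print("sharpened:", run_sessions(sharpen(NEXT_TOKEN_PROBS, 0.5), 100))
```

In this toy setup, the unusual options at the tail are the ones that lose probability when the distribution is sharpened, and a purely greedy picker returns the same answer in every session.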

Computer scientists call the downstream effect “mode collapse.” Multiple independent sessions, given similar prompts, converge around similar outputs. A 2024 study put 36 participants through a structured ideation exercise and found that ChatGPT users collectively produced fewer semantically distinct ideas than users of other creativity tools. They generated more ideas. More detailed ideas. But fewer genuinely different ones.
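One rough way to put a number on “semantically distinct” is the average pairwise distance between ideas. The sketch below is not the study’s method. It assumes a crude bag-of-words representation in place of real text embeddings, and the example ideas are invented, but it shows the shape of the measurement.

```python
import math
from collections import Counter
from itertools import combinations


def bag_of_words(text):
    """Crude stand-in for an embedding: lowercase word counts."""
    return Counter(text.lower().split())


def cosine_distance(a, b):
    """1 minus cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


def mean_pairwise_distance(ideas):
    """Average distance over all pairs of ideas; higher means a more varied set."""
    vectors = [bag_of_words(idea) for idea in ideas]
    pairs = list(combinations(vectors, 2))
    if not pairs:
        return 0.0
    return sum(cosine_distance(a, b) for a, b in pairs) / len(pairs)


# Invented examples: three near-duplicates versus three unrelated directions.
converged = [
    "add an ai chat assistant to the dashboard",
    "add ai assistant chat to the app dashboard",
    "add an ai chat assistant",
]
varied = [
    "rent the product by the hour",
    "train local repair shops as resellers",
    "publish the roadmap for customers to vote",
]

if __name__ == "__main__":
    print("converged set:", round(mean_pairwise_distance(converged), 3))
    print("varied set:", round(mean_pairwise_distance(varied), 3))
```

A set can be long and detailed and still score low here, which is the pattern the study describes: more ideas, but fewer different ones.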

The researchers also found something worth sitting with: ChatGPT users reported feeling less responsible for the ideas they generated. I’ll come back to that shortly.

This connects to something I’ve been thinking about with critical thinking and AI: when we stop questioning outputs because they look polished, we stop doing the work that matters.

What Does AI Ideation Convergence Mean for Competition?

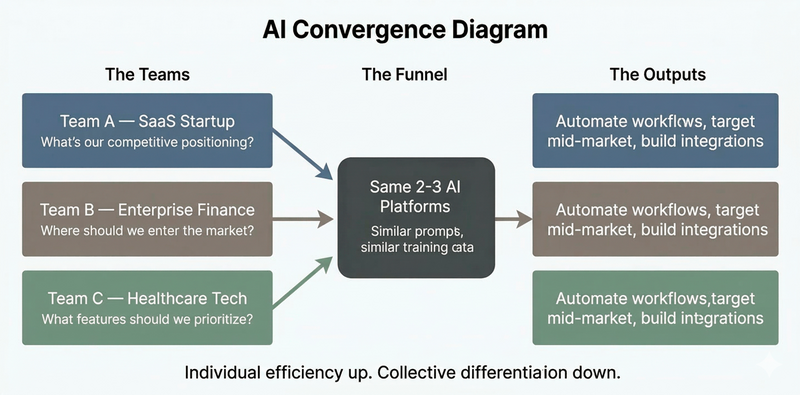

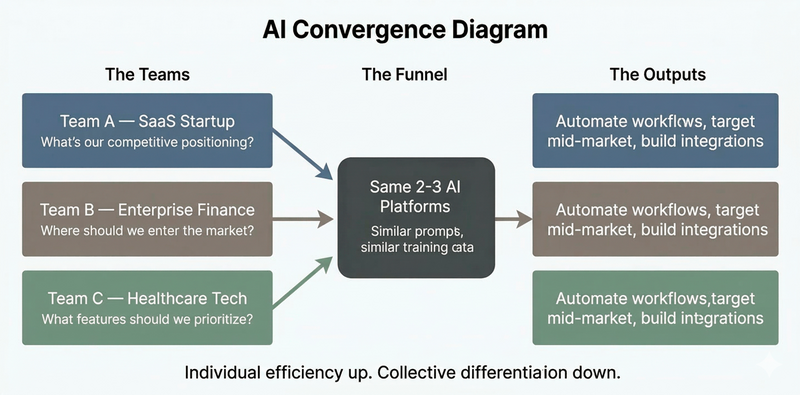

When every team in a given market runs strategic questions through the same two or three AI platforms with similar prompts, the answers cluster. Market entry analyses surface the same opportunities. Product ideation circles the same feature sets. Competitive positioning lands on the same differentiators.

Each of those exercises felt more efficient to the team running it, but across a market where everyone’s running the same exercises, the outputs start to look the same.

Researchers have described this as a “tragedy of the commons” in the marketplace of ideas, where each user benefits individually while the collective pool of ideas narrows. A study analyzing 41 million scientific papers published between 1980 and 2025 found exactly this: AI tools boosted individual productivity while the diversity of ideas across the field declined.

If your competitive advantage depends on seeing something others haven’t, and everyone’s staring at the same map, the advantage gets thinner with every prompt.

Something harder to measure is happening too.

The value of good thinking has always been tied to the specific context and experience of the person doing the thinking. A product manager who spent three years watching customers struggle with a workflow sees something in that problem that a model trained on general internet text can’t access. A founder who has failed twice in a particular market carries knowledge that doesn’t exist in any training set.

When those people hand their ideation to a model, they get speed but they lose the friction that produces original thinking: the dead ends, the slow connections, the things that only form when a specific mind sits with a specific problem long enough to see it differently.

Original thinking requires staying in contact with the problem. AI ideation tools, used as a substitute rather than a complement, reduce that contact. The output still looks good. It’s organized, detailed, and confident, but collectively, across everyone using the same tools, it trends toward sameness.

Why Idea Ownership Matters More Than Idea Volume

Back to that finding from the ACM study: participants who used ChatGPT for ideation felt less responsible for the ideas they produced.

That matters more than it may seem. Ownership shapes how hard someone fights for an idea, how deeply they understand its implications, how willing they are to develop it past the point where it still feels like someone else’s suggestion. An organization full of people who feel detached from the ideas they’re advancing has a specific kind of problem. Volume goes up, commitment to any particular direction gets diffuse, and execution suffers in ways that are hard to trace back to their source.

The metrics that are easy to measure, like output volume, time to draft, and ideas per session, all go up. The things that are harder to measure, like originality, conviction, and depth of engagement, move in the other direction.

The distinction that matters is where in the thinking process you introduce AI. Using it to pressure-test an idea you’ve already developed is different from using it to generate the idea in the first place. Asking a model to poke holes in your thesis is different from asking it for your thesis.

The first plays to what AI is genuinely good at: range, speed, surface coverage. The second asks it to do something it’s structurally bad at: generating the kind of contextually grounded, friction-born thinking that actually differentiates.

A more practical approach to using AI for ideation and thinking is:

- Don’t bring AI into a problem until your team has spent real time with it

- Define the problem in your own words

- Surface your own constraints

- Develop at least a rough hypothesis

- Then use AI to stress-test it, expand the research, and explore counterarguments

That sequence keeps the contextual engagement that produces original thinking while still capturing the speed.
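If your team works with scripts or internal tools, the same sequence can be enforced mechanically. This is a minimal sketch, not a recommended implementation: `Brief` and `build_stress_test_prompt` are hypothetical names, and the prompt wording is only an example. The one real design choice is that no prompt gets built until the problem, constraints, and hypothesis have been written by a person.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """The thinking a team does before any model is involved (hypothetical structure)."""
    problem: str
    constraints: list[str] = field(default_factory=list)
    hypothesis: str = ""


def build_stress_test_prompt(brief: Brief) -> str:
    """Return a critique-style prompt, or refuse if the team's own work is missing."""
    missing = []
    if not brief.problem.strip():
        missing.append("problem")
    if not brief.constraints:
        missing.append("constraints")
    if not brief.hypothesis.strip():
        missing.append("hypothesis")
    if missing:
        raise ValueError("Do this thinking first: " + ", ".join(missing))

    constraint_lines = "\n".join(f"- {c}" for c in brief.constraints)
    return (
        f"Problem, in our own words:\n{brief.problem}\n\n"
        f"Constraints we are working under:\n{constraint_lines}\n\n"
        f"Our current hypothesis:\n{brief.hypothesis}\n\n"
        "Do not propose a new thesis. Stress-test this one: list the strongest "
        "counterarguments, the evidence that would change our minds, and what "
        "we should research next."
    )
```

Calling `build_stress_test_prompt(Brief(problem="Churn is rising among small accounts"))` raises a `ValueError` naming the missing constraints and hypothesis, which is the point: the model only gets asked to poke holes once there is a thesis to poke at.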

The other thing worth doing is varying the inputs. Generic prompts produce generic outputs. Prompts grounded in your specific competitive context, your actual customer problems, and your particular constraints push the model away from its defaults. They don’t solve the convergence problem entirely, but they help.

Teams need to hold onto the expectation that ideation is work, and that the friction in that work is producing something of value. I’m still working out where the line is: how much AI-assisted thinking a team can do before the aggregate effect on their actual judgment starts to show up in the decisions they make.

If your team is working through the challenge of figuring out where AI fits in the thinking process without flattening the thinking itself, I’d be happy to talk it through.