IDC Forecasting Continued Growth in Big Data

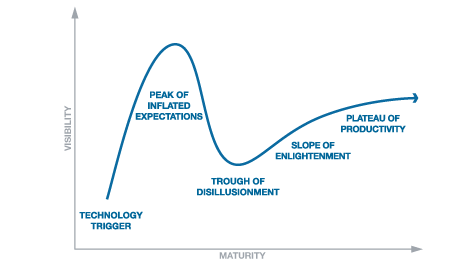

Earlier this week, in “Where are we in the big data lifecycle?”, I wrote that big data currently sits somewhere between the ‘technology trigger’ and the ‘peak of inflated expectations’ on Gartner’s Hype Cycle.

A newly released report from IDC titled “Worldwide Big Data Technology and